Just when I thought I had gotten my head around Big Data, the IT industry comes up with another twist. “Forget Big Data, Small Data is Where It’s At” (meaning the real action), screamed the headline of a recent Inc. magazine article. Not wanting to appear flat-footed, I frantically scoured the web to understand what the hell this “Small Data” phenomenon was all about. Not that it did much good.

A Forbes article defined Small Data as “…a dataset that contains very specific attributes….These small data sets are also ingested into a large data lake where machine-learning algorithms begin to understand patterns”. IBM, just to muddy the waters, contrasts the two on its BigDataHub portal, describing Small Data as having “batch velocities” and “structured varieties”. Allen Bonde, a former McKinsey consultant and now an executive at OpenText, opines in an E-Week interview that “Big Data is about machines, Small Data is about people.”

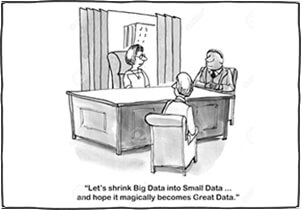

Ingestion, Data Lake, Patterns, Velocities, Varieties, People versus Machines: it all sounds very confusing. Isn’t there a simpler explanation that we ordinary mortals can understand? Or is Small Data just another ploy to get businesses to plop down more dollars on IT? Data is data, whether it is Big, Mid, or Small.

You don’t need special tools and techniques to analyze Big Data versus Small Data. Microsoft Excel now supports 1,048,576 rows and 16,384 columns per worksheet, which is about 17 billion cells. That is good enough for most big and small data applications. A four-terabyte storage device costs less than a hundred dollars and can house billions of records.
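As a quick sanity check on those figures, here is a minimal sketch of the arithmetic. The worksheet size is simply rows times columns; the record count assumes a hypothetical average record size of 1 KB (real record sizes vary widely) and decimal terabytes, as drive makers quote them.

```python
# Excel worksheet limits quoted in the text above.
EXCEL_MAX_ROWS = 1_048_576
EXCEL_MAX_COLS = 16_384


def worksheet_cells(rows: int = EXCEL_MAX_ROWS, cols: int = EXCEL_MAX_COLS) -> int:
    """Total cells in one worksheet grid: rows times columns."""
    return rows * cols


def records_that_fit(capacity_bytes: int, bytes_per_record: int) -> int:
    """Whole records that fit on a drive, ignoring file-system overhead."""
    return capacity_bytes // bytes_per_record


cells = worksheet_cells()
print(f"{cells:,} cells")  # 17,179,869,184 cells

four_tb = 4 * 10**12  # decimal terabytes, as drive capacities are marketed
# Assumption: roughly 1 KB per record (hypothetical, for illustration only).
print(f"{records_that_fit(four_tb, 1_000):,} records")  # 4,000,000,000 records
```

Even if each record were ten times larger, the drive would still hold hundreds of millions of them.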

It’s not the size of the data that matters, but its quality. What you really need is Smart Data. You need to make sure that you have compiled data attributes that are predictive of the outcome you care about and can offer deep, valuable insights.

The best way to illustrate this is to consider the college dropout crisis in the United States. A recent New York Times article, “Personalized Tips from a Counsellor? That’s Priceless”, states that only half of the sixty percent of students who enroll in college go on to graduate. Given limited resources, a highly targeted student counselling program is one way to address this crisis. Predictive analytics can play a valuable role in identifying students at high risk of dropping out who need help. But the analytics is possible only if you have data attributes that correlate with the college dropout rate.

The NYT article goes on to say that the dropout problem is particularly acute for students whose parents did not attend college. It states that “30 percent of first-generation freshmen drop out of school in three years”. So a family’s academic history is critical information. There are probably other attributes that correlate with “dropout propensity” as well. Some of these attributes are intuitive and others may not be, which is why you need mathematical models to discover these correlations.
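To make “discovering correlations” a little more concrete, here is a minimal sketch that screens yes/no attributes by how strongly each one tracks dropping out. It uses the phi coefficient (the Pearson correlation between two binary variables) as a simple first-pass screen, not a full predictive model. The attribute names (`first_generation`, `works_part_time`) and the six student records are hypothetical, invented purely for illustration; they are not data from the NYT article.

```python
from math import sqrt


def phi_coefficient(flags, dropped):
    """Pearson correlation between two 0/1 sequences (the phi coefficient)."""
    n = len(flags)
    n11 = sum(1 for f, d in zip(flags, dropped) if f and d)
    n10 = sum(1 for f, d in zip(flags, dropped) if f and not d)
    n01 = sum(1 for f, d in zip(flags, dropped) if not f and d)
    n00 = n - n11 - n10 - n01
    denom = sqrt((n11 + n10) * (n01 + n00) * (n11 + n01) * (n10 + n00))
    # An attribute that never varies carries no signal; report zero.
    return 0.0 if denom == 0 else (n11 * n00 - n10 * n01) / denom


def rank_attributes(records, target="dropped_out"):
    """Rank each binary attribute by the absolute strength of its
    correlation with the target flag, strongest first."""
    attributes = [key for key in records[0] if key != target]
    outcome = [r[target] for r in records]
    scores = {a: phi_coefficient([r[a] for r in records], outcome) for a in attributes}
    return sorted(scores.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical toy records: 1 = yes, 0 = no.
records = [
    {"first_generation": 1, "works_part_time": 0, "dropped_out": 1},
    {"first_generation": 1, "works_part_time": 1, "dropped_out": 1},
    {"first_generation": 1, "works_part_time": 0, "dropped_out": 0},
    {"first_generation": 0, "works_part_time": 1, "dropped_out": 0},
    {"first_generation": 0, "works_part_time": 0, "dropped_out": 0},
    {"first_generation": 0, "works_part_time": 1, "dropped_out": 0},
]

ranking = rank_attributes(records)
for name, score in ranking:
    print(f"{name}: {score:+.3f}")
# first_generation: +0.707
# works_part_time: +0.000
```

In practice you would run a screen like this over your 15 to 20 candidate attributes on real records, then feed the strongest ones into a proper predictive model.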

Bottom line: given the problem at hand, you need to make sure you are compiling relevant data. In general, most predictive models, particularly of the type that Advancement Professionals need, require no more than 15 to 20 data attributes. The key is to make sure your data-collection strategy is smart enough to compile these attributes.
